Rendering Basics

Starting From Scratch

As you may have seen in last week's post, my engine doesn't really shine for its graphics capabilities. When I started it a few years back, I eventually went through the famous Learn OpenGL tutorial to try to make things somewhat pretty. After a few months of work on weekends, it could load 3D models and do some basic shading. Out of curiosity, I had even implemented Valve's famous SDF text rendering (for scaling text at no additional memory cost).

But I have never intended to make the next-gen graphics game, so I put those efforts on ice for the past two years and focused primarily on original physics and gameplay. However, as part of a recent push to make good progress on my game and start sharing it with others, I decided this week was the time to start implementing rendering in my game again, from scratch, so I could apply everything I have learned since.

The Pipeline

Requirements

As mentioned in my previous post, the game I am working on requires several features that affect rendering pipeline decisions. Among them:

- Procedurally generated map → little or no baking (of lights, etc.)

- Night-time urban environment → many lights

- Transparency

- ECS-friendly, of course

Forward vs Deferred

I started the week with limited knowledge of what my options were. I had heard a few keywords, such as Forward and Deferred, but didn't really know what they entailed. While searching around, I stumbled upon a short but great video explaining the differences between forward and deferred rendering.

In short, 3D games started with forward rendering pipelines:

For each mesh:
    For each light:
        render(mesh, light)
postProcess()

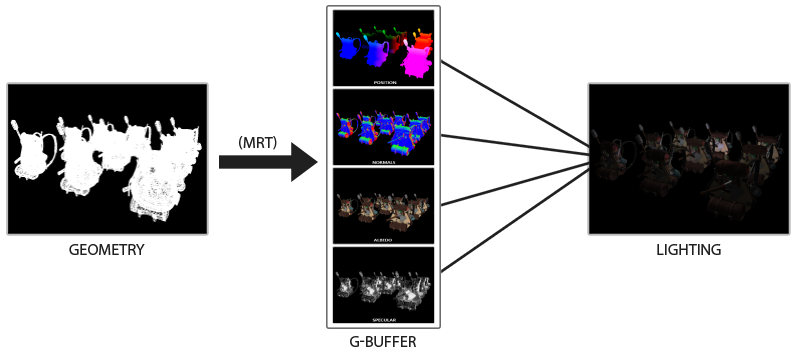
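To make the cost of this loop concrete, here is a tiny CPU-side sketch of classic multi-pass forward rendering in C++. Everything in it is hypothetical: a "mesh" is reduced to a single flat surface, lights are directional, and `render` is a Lambert shading stand-in rather than a real GPU draw. It only illustrates that the number of draw calls grows as meshes × lights.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical minimal types: a "mesh" is one flat surface, a light is a direction.
struct Vec3 { float x, y, z; };

float dot(const Vec3& a, const Vec3& b) { return a.x * b.x + a.y * b.y + a.z * b.z; }

struct Light { Vec3 dirToLight; float intensity; };  // directional light; dirToLight assumed normalized
struct Mesh { Vec3 normal; float albedo; };          // normal assumed normalized

// One forward pass: shades `mesh` with a single `light` using Lambert's cosine law.
float render(const Mesh& mesh, const Light& light) {
    const float nDotL = dot(mesh.normal, light.dirToLight);
    return mesh.albedo * light.intensity * (nDotL > 0.0f ? nDotL : 0.0f);
}

struct ForwardFrame {
    std::vector<float> radiance;  // accumulated lighting per mesh
    std::size_t drawCalls = 0;    // one per (mesh, light) pair
};

// Classic multi-pass forward rendering: every mesh is drawn once per light and
// the results are summed (additive blending on a real GPU).
ForwardFrame renderForward(const std::vector<Mesh>& meshes, const std::vector<Light>& lights) {
    ForwardFrame frame;
    frame.radiance.assign(meshes.size(), 0.0f);
    for (std::size_t m = 0; m < meshes.size(); ++m) {
        for (const Light& light : lights) {
            frame.radiance[m] += render(meshes[m], light);
            ++frame.drawCalls;
        }
    }
    return frame;
}
```

With 100 meshes and 50 lights, this naive loop already issues 5,000 draw calls per frame.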

But eventually, as games needed more and more lights, came the idea of rendering in two passes:

For each mesh:
    render(mesh) // Writes to the G-Buffer on the GPU (per pixel: albedo, i.e. unshaded color, normal, depth, etc.); nothing is actually lit yet
For each light:
    render(light) // Over the entire screen: reads the G-Buffer to know how the light should affect each pixel
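A similarly simplified sketch of the two deferred passes, again hypothetical and CPU-side: fragments are assumed to be already rasterized and mapped to pixels, and the G-Buffer holds only an albedo value, a normal and a depth per pixel. The point is that the lighting pass reads nothing but what the geometry pass left in the G-Buffer, so each light shades each visible pixel once, no matter how many meshes overlapped it.

```cpp
#include <cassert>
#include <cstddef>
#include <limits>
#include <vector>

struct Vec3 { float x, y, z; };

float dot(const Vec3& a, const Vec3& b) { return a.x * b.x + a.y * b.y + a.z * b.z; }

// Hypothetical minimal G-Buffer texel: what the geometry pass stores per pixel.
struct GBufferTexel {
    float albedo = 0.0f;
    Vec3 normal{0.0f, 0.0f, 0.0f};
    float depth = std::numeric_limits<float>::infinity();  // cleared to "far"
};

// A rasterized fragment of some mesh, already mapped to a pixel index.
struct Fragment { std::size_t pixel; float depth; float albedo; Vec3 normal; };

struct Light { Vec3 dirToLight; float intensity; };  // directional light, normalized direction

// Pass 1: keep only the nearest fragment per pixel (depth test). Nothing is lit yet.
void geometryPass(std::vector<GBufferTexel>& gbuffer, const std::vector<Fragment>& fragments) {
    for (const Fragment& f : fragments) {
        assert(f.pixel < gbuffer.size());
        GBufferTexel& texel = gbuffer[f.pixel];
        if (f.depth < texel.depth) {
            texel.albedo = f.albedo;
            texel.normal = f.normal;
            texel.depth = f.depth;
        }
    }
}

// Pass 2: for each light, shade every pixel from the G-Buffer data alone (Lambert).
std::vector<float> lightingPass(const std::vector<GBufferTexel>& gbuffer, const std::vector<Light>& lights) {
    std::vector<float> color(gbuffer.size(), 0.0f);
    for (const Light& light : lights) {
        for (std::size_t p = 0; p < gbuffer.size(); ++p) {
            const float nDotL = dot(gbuffer[p].normal, light.dirToLight);
            color[p] += gbuffer[p].albedo * light.intensity * (nDotL > 0.0f ? nDotL : 0.0f);
        }
    }
    return color;
}
```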

Deferred pipelines dominated for roughly the past 15 years because they handle many lights efficiently. However, they come with limitations: 1) the G-Buffer takes a lot of GPU memory, so it cannot store much information per pixel, which limits how many types of materials can be used to shade meshes (they must all work from the same data stored in the G-Buffer); and 2) transparent meshes are difficult: the G-Buffer holds only one surface per pixel, so they must be rendered in a forward way after the deferred pass (otherwise they would affect the depth buffer and hide what is behind them), with limited shading options since lights are applied in the deferred pass. Hence the recent resurgence of forward rendering pipelines, supported by the many algorithmic improvements of past decades (such as the tiled and clustered light culling behind Forward+), which make them easier to work with while being just as efficient.
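To put a rough number on the first limitation, here is the arithmetic for a hypothetical G-Buffer made of four RGBA8 color targets plus a 32-bit depth buffer. The layout is an assumption for illustration only; real engines choose their own formats and often pack more data.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical G-Buffer layout: four RGBA8 color targets (e.g. albedo, normal,
// material parameters, emissive) plus a 32-bit depth buffer.
constexpr std::uint64_t kColorTargets = 4;
constexpr std::uint64_t kBytesPerColorTexel = 4;  // RGBA8
constexpr std::uint64_t kBytesPerDepthTexel = 4;  // 32-bit depth

constexpr std::uint64_t gbufferBytes(std::uint64_t width, std::uint64_t height) {
    return width * height * (kColorTargets * kBytesPerColorTexel + kBytesPerDepthTexel);
}

// 1920 x 1080 = 2,073,600 pixels at 20 bytes each = 41,472,000 bytes (about 39.6 MiB).
static_assert(gbufferBytes(1920, 1080) == 41'472'000, "1080p G-Buffer size");
```

At 3840 × 2160 the same layout needs four times as much, 165,888,000 bytes (about 158 MiB), and every extra per-pixel material parameter adds to that cost across the whole screen.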

Decision

After weighing my needs against those two options, I decided to go with the newer Forward+ approach. It seems easier to iterate on while supporting my need for many lights and transparent materials.
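The core idea of Forward+ is to cull lights per screen tile before shading, so each pixel's forward shader only loops over the lights that can reach it. Below is a CPU sketch of that culling step under simplifying assumptions: each light is assumed to be already projected to a screen-space circle, and the per-tile depth bounds that real implementations use (usually in a compute shader) are ignored. The names `ScreenLight` and `cullLightsPerTile` are hypothetical.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical input: a light already projected to a screen-space circle (in pixels).
struct ScreenLight { float x, y, radius; };

// Returns, for every tile in row-major order, the indices of the lights whose
// circle overlaps that tile. A Forward+ fragment shader would then loop only
// over the list of the tile containing its pixel.
std::vector<std::vector<std::size_t>> cullLightsPerTile(const std::vector<ScreenLight>& lights,
                                                        int screenWidth, int screenHeight,
                                                        int tileSize) {
    assert(screenWidth > 0 && screenHeight > 0 && tileSize > 0);
    const int tilesX = (screenWidth + tileSize - 1) / tileSize;  // round up
    const int tilesY = (screenHeight + tileSize - 1) / tileSize;
    std::vector<std::vector<std::size_t>> tiles(static_cast<std::size_t>(tilesX) * tilesY);
    for (int ty = 0; ty < tilesY; ++ty) {
        for (int tx = 0; tx < tilesX; ++tx) {
            const float minX = static_cast<float>(tx * tileSize);
            const float minY = static_cast<float>(ty * tileSize);
            const float maxX = minX + tileSize;
            const float maxY = minY + tileSize;
            for (std::size_t i = 0; i < lights.size(); ++i) {
                const ScreenLight& light = lights[i];
                // Closest point of the tile rectangle to the light's center.
                const float px = std::clamp(light.x, minX, maxX);
                const float py = std::clamp(light.y, minY, maxY);
                const float dx = light.x - px;
                const float dy = light.y - py;
                if (dx * dx + dy * dy <= light.radius * light.radius) {
                    tiles[static_cast<std::size_t>(ty) * tilesX + tx].push_back(i);
                }
            }
        }
    }
    return tiles;
}
```

Clustered shading, which the "light clusters" step later in this post refers to, extends the same idea by also slicing each tile along depth.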

A Weekend of Work

This time, unlike 2 or 3 years ago when I first started on rendering, I could use AI along the way. Being able to throw any question at an LLM (and get a somewhat accurate answer) is a game changer when learning a new skill. If I'm lost or don't know where to start, I can ask it what the industry standard is for X or Y. Of course it makes mistakes, a lot of them, but the time saved is undeniable.

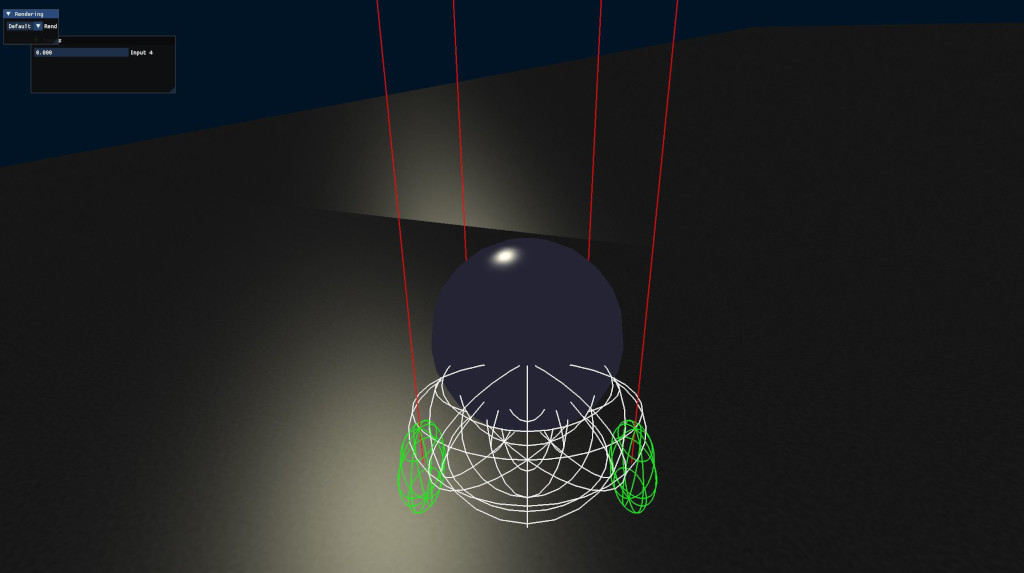

I kept the base of my engine's rendering, which was already integrated into the ECS: one system binds the scene's framebuffer, another draws meshes onto it, a third binds the window's framebuffer, and a fourth applies post-processing. If this seems complicated, it is partly because I designed the engine to later be used within the editor or without post-processing, so rendering is split into many bricks I can add or remove. This weekend's challenge was to replace the mesh rendering step with something more up to date. Here are the results:

Obviously this is still very early, and not everything has been implemented in the little time I had. One thing that took me a while to figure out was how to support an arbitrary number of (possibly user-defined) materials, so that special models can be shaded in unique ways. Here is the gist of what is done and what is left to do:

- Setup camera

- Prepare meshes for rendering (cull & organize them per material and properties to minimize GPU calls)

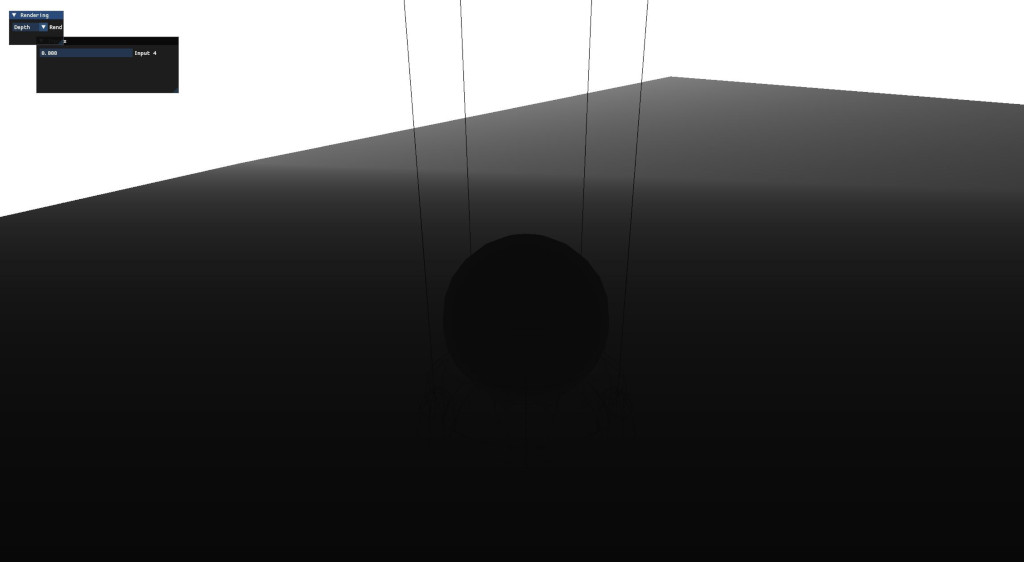

- Render depth pre-pass (to avoid redrawing over expensive shader outputs)

- Build light clusters (TODO / WIP)

- Render opaque meshes

- Render transparent meshes (TODO)

- Skybox, bloom, tone mapping, ... (TODO)
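Since building light clusters is still on the TODO list, here is one common way to slice the view frustum along depth. This is a hedged sketch, not the engine's implementation: slices are spaced exponentially between the near and far planes, so slice thickness grows with distance, roughly matching how screen tiles widen with depth under perspective. Each (tileX, tileY, slice) triple is then flattened into a single index. The function names and the flattening order are assumptions.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>

// Maps a positive view-space depth to one of `numSlices` slices spaced
// exponentially between zNear and zFar (depths outside the range are clamped).
int depthSlice(float viewDepth, float zNear, float zFar, int numSlices) {
    assert(viewDepth > 0.0f && zNear > 0.0f && zFar > zNear && numSlices > 0);
    // t is 0 at the near plane and 1 at the far plane, linear in log(depth).
    const float t = std::log(viewDepth / zNear) / std::log(zFar / zNear);
    const int slice = static_cast<int>(std::floor(t * static_cast<float>(numSlices)));
    return std::clamp(slice, 0, numSlices - 1);
}

// Flattens (tileX, tileY, slice) into one cluster index, with x varying fastest.
int clusterIndex(int tileX, int tileY, int slice, int tilesX, int tilesY) {
    return tileX + tilesX * (tileY + tilesY * slice);
}
```

With a near plane of 1 and a far plane of 10,000 split into 4 slices, each slice covers one factor of 10 in depth (1 to 10, 10 to 100, and so on).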

I am especially happy with how I structured my shaders to support both customization and performance: draw calls are grouped per material (shader) and per material instance (a specific set of uniform values for a given shader, which can be shared by multiple draw calls). This is probably already standard (or behind the state of the art), but at least I will have tried.
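A minimal sketch of that grouping, under assumptions: draw calls are reduced to hypothetical integer IDs for the material (shader), the material instance (uniform set) and the mesh, and state changes are simply counted instead of issuing real OpenGL calls. Sorting by (material, instance) makes all draws that share a shader contiguous, and within that shader all draws that share a uniform set, so each shader and each instance ends up bound once.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <tuple>
#include <vector>

// Hypothetical draw-call record: shader, set of uniform values, and mesh to draw.
struct DrawCall { std::uint32_t material; std::uint32_t instance; std::uint32_t mesh; };

struct StateChanges { int shaderBinds = 0; int instanceBinds = 0; };

// Groups draws by shader first, then by material instance within each shader.
void sortDrawCalls(std::vector<DrawCall>& draws) {
    std::sort(draws.begin(), draws.end(), [](const DrawCall& a, const DrawCall& b) {
        return std::tie(a.material, a.instance, a.mesh) < std::tie(b.material, b.instance, b.mesh);
    });
}

// Counts how often a renderer walking the list in order would switch shader or uniforms.
StateChanges countStateChanges(const std::vector<DrawCall>& draws) {
    StateChanges changes;
    for (std::size_t i = 0; i < draws.size(); ++i) {
        const bool newMaterial = i == 0 || draws[i].material != draws[i - 1].material;
        const bool newInstance = newMaterial || draws[i].instance != draws[i - 1].instance;
        if (newMaterial) ++changes.shaderBinds;
        if (newInstance) ++changes.instanceBinds;
    }
    return changes;
}
```

For five draws arriving as materials 1, 2, 1, 1, 2, sorting drops the shader binds from 4 to 2 and the uniform-set binds from 5 to 3.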

See you next Sunday for more progress! I'll leave you with this last feature I implemented to debug the state of my depth buffer:
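The post does not say how this depth view is built. One common approach, shown for example in Learn OpenGL's depth testing chapter, is to convert the non-linear depth-buffer value back to a view-space distance before displaying it. Here is a C++ transcription of that formula, assuming the default OpenGL depth range of [0, 1] and a standard perspective projection. The function names are hypothetical.

```cpp
#include <cassert>

// Converts a depth-buffer value in [0, 1] (default glDepthRange, standard
// perspective projection) back to a positive view-space distance.
float linearizeDepth(float depth, float zNear, float zFar) {
    assert(zNear > 0.0f && zFar > zNear);
    const float ndcZ = depth * 2.0f - 1.0f;  // back to normalized device coordinates [-1, 1]
    return (2.0f * zNear * zFar) / (zFar + zNear - ndcZ * (zFar - zNear));
}

// Maps the linear distance to [0, 1] so it can be written out as a grayscale color.
float depthToGray(float depth, float zNear, float zFar) {
    return (linearizeDepth(depth, zNear, zFar) - zNear) / (zFar - zNear);
}
```

With a near plane of 1 and a far plane of 100, a raw depth value of 0.5 corresponds to a distance of only about 1.98 units. This is why an unprocessed depth view looks almost entirely white, and why linearizing it first makes it readable.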